In a previous article, we discussed CXL (Compute Express Link), a technology poised to revolutionize the future of data centers. To fully appreciate today’s content, we recommend reviewing that article first.

Semiconductor and artificial intelligence (AI) systems continue to evolve with a strong focus on increasing speed. Broadly speaking, there are two primary aspects of speed improvement: enhancing CPU and GPU processing speeds, and accelerating data transfer rates.

Today, I’d like to introduce Astera Labs Inc. (NASDAQ: ALAB), a semiconductor company directly involved in boosting both processing and data movement speeds. ALAB develops high-performance interconnect solutions for data centers, cloud computing, and AI infrastructure.

1. Company Overview

The rapid growth of AI and the accelerating pace of AI platform development have created exponential demand for computing power at cloud scale. To fully unlock the potential of cloud and AI infrastructure, purpose-built interconnect solutions are essential, and that is exactly what ALAB offers. The company went public on March 20, 2024, and at the time of writing has a market capitalization of approximately $20 billion.

2. ALAB’s Products and Services

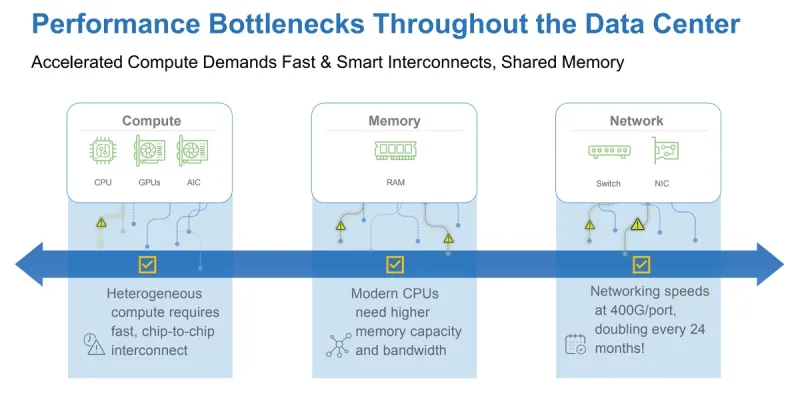

Astera Labs’ solutions are designed to eliminate three key bottlenecks in data centers:

- Compute bottlenecks

- Memory bottlenecks

- Network bottlenecks

▶ Semiconductor Products

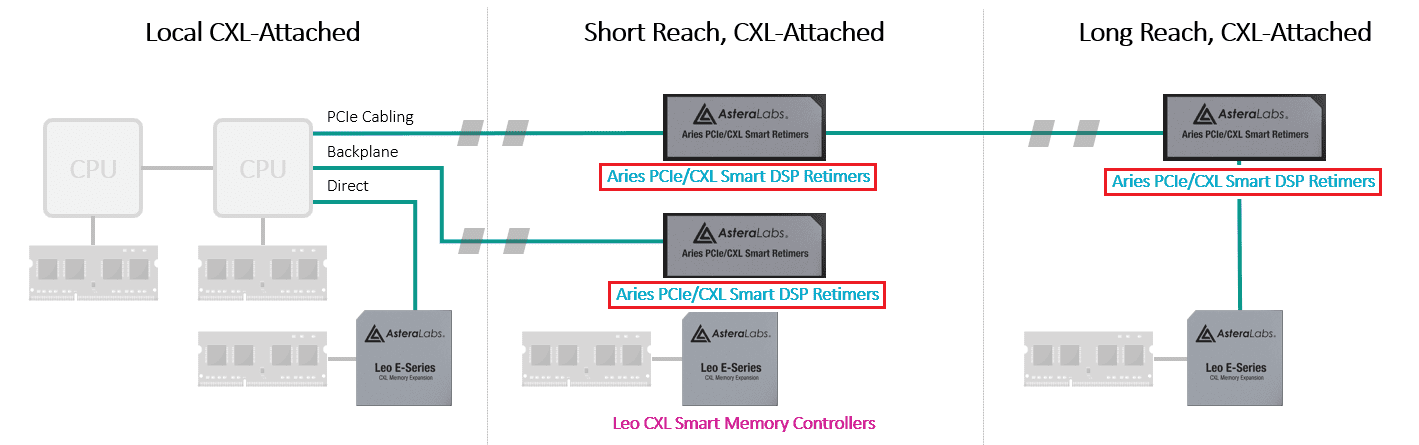

A. Aries PCIe/CXL Smart DSP Retimers

These devices extend the reach of PCIe (Peripheral Component Interconnect Express) and CXL (Compute Express Link) signals, so that components in a computer system can communicate over longer distances without a loss of signal quality.

a. Why Retimers Matter

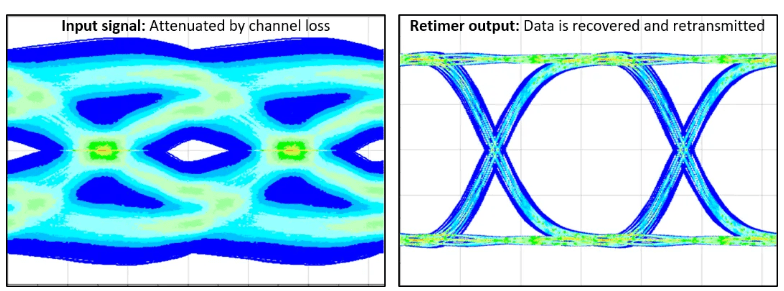

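High-speed signals degrade over longer distances due to attenuation, distortion, reflection, interference, and noise. A retimer addresses this by recovering the clock and data from the degraded signal and retransmitting a clean copy. Unlike a simple redriver, which amplifies the noise along with the signal, a retimer effectively resets the channel’s loss and jitter budget, which is what extends the usable transmission range.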

(Left) Signal without retimer / (Right) Signal with retimer

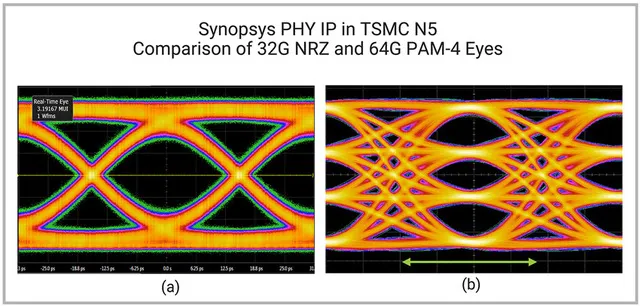

(a) PCIe 5.0 signal / (b) PCIe 6.0 signal

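PCIe 6.0 (b) doubles the per-lane data rate of PCIe 5.0 (a), from 32 GT/s to 64 GT/s, by switching from two-level NRZ signaling to four-level PAM4. Because PAM4 stacks three eyes into the same voltage swing, each eye is much smaller, which makes the signal more susceptible to degradation and errors.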
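To make the NRZ-to-PAM4 trade-off concrete, the following Python sketch computes the data rate and relative eye height for each generation. It is a deliberately idealized model, assuming both generations signal at roughly 32 GBaud and that the PAM4 levels are evenly spaced; real links also depend on FEC, equalization, and transmitter linearity. The helper names are illustrative.

```python
import math

def data_rate_gt_s(symbol_rate_gbaud: float, levels: int) -> float:
    """Per-lane data rate = symbol rate x bits carried per symbol (log2 of levels)."""
    return symbol_rate_gbaud * math.log2(levels)

def eye_height_fraction(levels: int) -> float:
    """Evenly spaced levels split the full voltage swing into (levels - 1) stacked eyes."""
    return 1 / (levels - 1)

def margin_penalty_db(levels: int) -> float:
    """Vertical eye-opening loss relative to NRZ (2 levels), in dB."""
    return 20 * math.log10(eye_height_fraction(2) / eye_height_fraction(levels))

# Assumption: both generations signal at ~32 GBaud; PCIe 5.0 uses NRZ, PCIe 6.0 uses PAM4.
for name, levels in [("PCIe 5.0 (NRZ)", 2), ("PCIe 6.0 (PAM4)", 4)]:
    print(f"{name}: {data_rate_gt_s(32, levels):.0f} GT/s per lane, "
          f"eye height {eye_height_fraction(levels):.2f} of swing, "
          f"penalty {margin_penalty_db(levels):.1f} dB")
```

In this model, each PAM4 eye is one third the height of an NRZ eye, a penalty of about 9.5 dB, which is the price of doubling throughput without raising the symbol rate.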

b. Key Features

- Signal quality improvement: Aries Retimers can triple the connection range by mitigating PCIe/CXL signal quality issues. This is critical for complex server architectures.

- Low power design: Aries 6 retimers consume just 11 W in a typical 16-lane PCIe 6.x configuration, which Astera Labs describes as the industry’s lowest.

- Integration with COSMOS (Connectivity System Management and Optimization Software): Offers diagnostics, enhanced security, and troubleshooting.

- More retimers are needed as physical cable length increases.
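The point that longer channels need more retimers follows from a loss budget: each retimer regenerates the signal, so every segment between retimers gets the full budget again. The sketch below assumes commonly cited approximate end-to-end loss budgets (about 36 dB for PCIe 5.0 and about 32 dB for PCIe 6.0 at the 16 GHz Nyquist frequency) and a hypothetical, uniform loss per inch. It ignores package, connector, and reflection effects, and it is not how Aries deployments are actually sized.

```python
import math

# Commonly cited approximate end-to-end channel loss budgets at 16 GHz (Nyquist).
LOSS_BUDGET_DB = {"PCIe 5.0": 36.0, "PCIe 6.0": 32.0}

def retimers_needed(length_in: float, loss_db_per_in: float, generation: str,
                    margin_db: float = 0.0) -> int:
    """Minimum retimer count so no segment between regeneration points exceeds the budget.

    Simplification: each retimer fully regenerates the signal, so every segment
    gets the whole budget, and loss is assumed uniform along the channel.
    """
    budget_db = LOSS_BUDGET_DB[generation] - margin_db
    if budget_db <= 0:
        raise ValueError("margin leaves no loss budget")
    total_loss_db = length_in * loss_db_per_in
    segments = max(1, math.ceil(total_loss_db / budget_db))
    return segments - 1

# Hypothetical channel: 70 inches at 1.0 dB/inch (70 dB total).
for gen in LOSS_BUDGET_DB:
    print(gen, "->", retimers_needed(70, 1.0, gen), "retimer(s)")
```

With this hypothetical channel, the model needs one retimer for PCIe 5.0 but two for PCIe 6.0, mirroring why reach gets tighter as data rates rise.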

c. Application Areas

- I/O and clustering for GPU/AI accelerators

- Connectivity among server CPUs, networks, storage, accelerators, and CXL memory

- Distributed computing via PCIe riser and add-in cards

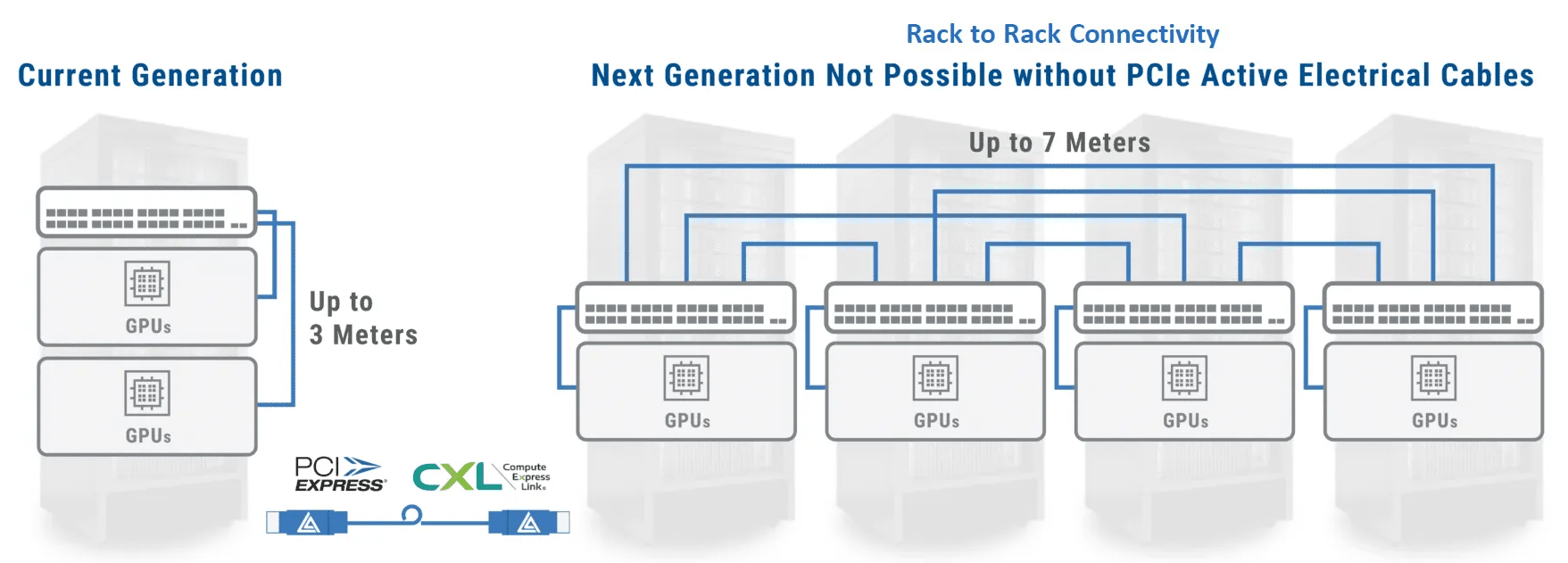

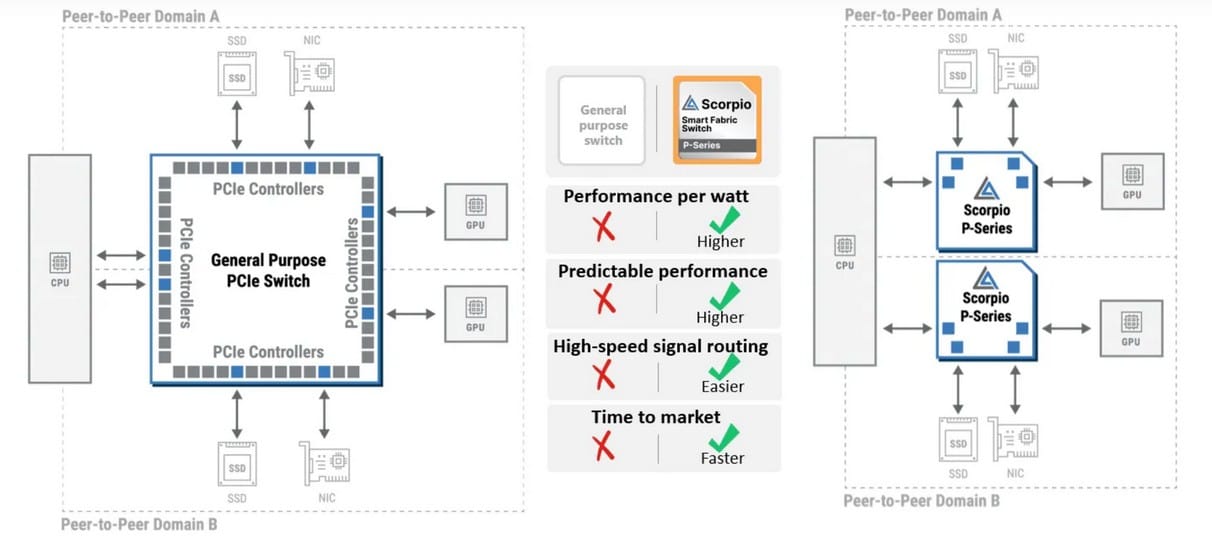

B. Aries PCIe/CXL Smart Cable Modules™

- These retimers also extend interconnects across different PCBs and server racks. Packaged as Smart Cable Modules (SCMs), they enable longer connections within the data center, including GPU clustering across multiple racks with cable reach of up to 7 meters.

- Supports various connection types: GPU-GPU, GPU-switch, GPU-CPU

Aries Smart Cable Modules enabling up to 7m inter-rack connections


C. Leo CXL Smart Memory Controller

a. Memory Solutions for the AI Era

- By enabling memory expansion, sharing, and pooling, this solution enhances AI and cloud infrastructure performance.

- It removes bandwidth/capacity bottlenecks, reduces total cost of ownership, and optimizes memory utilization.

- Offers top-tier standard security features ensuring end-to-end data integrity and protection

- Enables server-class RAS (Reliability, Availability, Serviceability) with advanced diagnostics and fleet management via COSMOS
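The utilization claim rests on a simple effect: hosts rarely hit their peak memory demand at the same moment. The toy model below, with entirely hypothetical demand numbers, compares provisioning every host for its own peak against provisioning a shared pool for the peak of the combined demand. It ignores allocation granularity, the added latency of CXL-attached memory, and failure domains, and it is not a model of how Leo implements pooling.

```python
from typing import List

def dedicated_capacity_gb(demand: List[List[int]]) -> int:
    """Each host is provisioned for its own peak demand."""
    return sum(max(host) for host in demand)

def pooled_capacity_gb(demand: List[List[int]]) -> int:
    """A shared pool only has to cover the peak of the combined demand."""
    return max(sum(step) for step in zip(*demand))

# Hypothetical memory demand (GB) of three hosts over four time steps.
demand = [
    [256, 128, 128, 512],
    [512, 256, 128, 128],
    [128, 512, 256, 128],
]
print("dedicated:", dedicated_capacity_gb(demand), "GB")
print("pooled:   ", pooled_capacity_gb(demand), "GB")
```

Here the pool needs 896 GB instead of 1,536 GB, roughly 42% less, because the three hosts peak at different times.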

b. Technical Superiority

- Industry-first solution supporting both memory expansion and pooling

- 50% higher memory bandwidth and 25% lower latency, according to Astera Labs

- Supports DDR5 memory speeds up to 5600 MT/s

- Divided into E-Series (expansion only) and P-Series (expansion + pooling) models

- Broad interoperability with major CPU, GPU, and memory vendors
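To put the 5600 MT/s figure in perspective, here is the standard peak-bandwidth arithmetic for one DDR5 channel, set next to a x16 link. The link configuration is an assumption for illustration (x16 CXL over a 32 GT/s PHY with 128b/130b encoding); Leo’s actual channel count and link setup are not stated in this article, and both numbers are theoretical peaks rather than sustained throughput.

```python
def ddr5_channel_gb_s(mt_s: int, data_bits: int = 64) -> float:
    """Peak bandwidth of one DDR5 channel in GB/s: transfers per second x bytes per transfer.

    A DDR5 DIMM channel carries 64 data bits (two 32-bit subchannels), excluding ECC bits.
    """
    return mt_s * 1e6 * (data_bits / 8) / 1e9

def pcie_link_gb_s(gt_s: float, lanes: int, encoding_efficiency: float) -> float:
    """Approximate one-direction link bandwidth in GB/s after line-encoding overhead."""
    return gt_s * lanes * encoding_efficiency / 8

ddr5 = ddr5_channel_gb_s(5600)
# Assumption: a x16 CXL link on the 32 GT/s PCIe 5.0 PHY with 128b/130b encoding.
link = pcie_link_gb_s(32, 16, 128 / 130)
print(f"DDR5-5600, one channel: {ddr5:.1f} GB/s")
print(f"x16 link at 32 GT/s: {link:.1f} GB/s per direction")
```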

c. Application Areas

- Cloud servers for AI and machine learning workloads

- Heterogeneous CPU, GPU, and accelerator servers

- General-purpose computing servers

Glossary

• Fabric

“Fabric” refers to the logical structure or system of interconnected network devices. This kind of structure is designed to enable efficient data communication and streamlined network management.

• Switch

A switch is a device that transmits data packets between networks or devices. It receives data through an input port and sends it to the appropriate output port. The main function of a switch is to efficiently manage data traffic and deliver it via the optimal path.
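The input-port-to-output-port behavior described above can be illustrated with the classic MAC-learning switch used in Ethernet. This is a generic textbook sketch with hypothetical names, not a model of Scorpio: PCIe and CXL switches do not learn addresses this way, but route transactions using address ranges and IDs configured when the system enumerates the fabric.

```python
from typing import Dict, List, Optional

class LearningSwitch:
    """Toy Ethernet-style switch that learns which port each address lives on."""

    def __init__(self, num_ports: int) -> None:
        self.num_ports = num_ports
        self.table: Dict[str, int] = {}  # address -> port

    def receive(self, in_port: int, src: str, dst: str) -> List[int]:
        """Return the output port(s) a frame is forwarded to."""
        self.table[src] = in_port               # learn where the sender is attached
        out: Optional[int] = self.table.get(dst)
        if out is None:                         # unknown destination: flood all other ports
            return [p for p in range(self.num_ports) if p != in_port]
        if out == in_port:                      # destination is on the ingress port: drop
            return []
        return [out]                            # known destination: forward to one port

sw = LearningSwitch(num_ports=4)
print(sw.receive(0, "A", "B"))  # B unknown, so the frame is flooded
print(sw.receive(2, "B", "A"))  # A was learned on port 0
print(sw.receive(0, "A", "B"))  # B was learned on port 2
```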

• AI Head Node

An AI head node serves as the “brain” of an AI cluster. It is the central server responsible for managing and orchestrating the entire AI system.

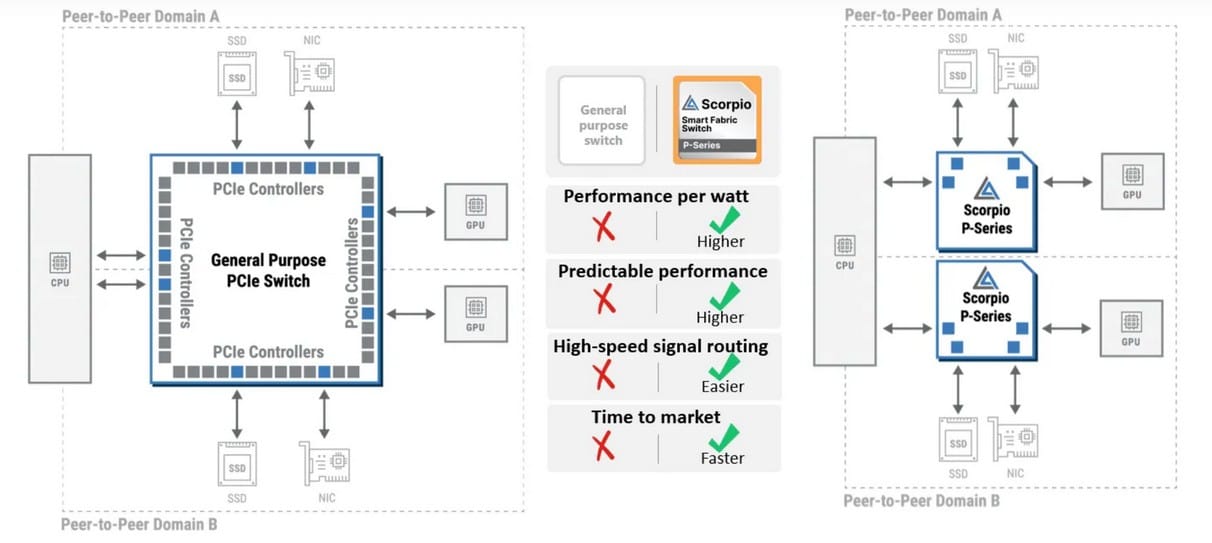

D. Scorpio Smart Fabric Switch

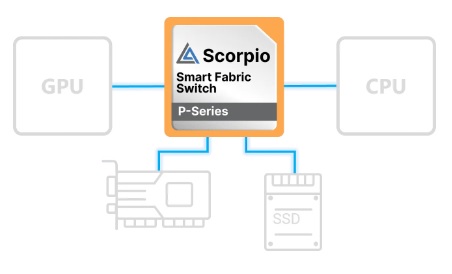

a. Scorpio P-Series Fabric Switch

- Custom-built to connect AI head nodes and optimized for high-performance AI workloads

- Efficiently handles mixed traffic between GPUs, CPUs, NICs, and SSDs

- Controllers optimized for AI workloads improve performance per watt

- Dedicated switching hardware delivers predictable performance

- A modular, GPU-aligned design simplifies high-speed signal routing, reduces crosstalk, and improves signal integrity

- Purpose-built for AI workloads to deliver top-tier performance and faster time-to-market

Example: Imagine a data center as a highway with heavy traffic during rush hour. The Scorpio P-Series acts like a traffic officer, making sure different types of data packets (cars, trucks, emergency vehicles) flow smoothly, minimizing delays and maximizing throughput.

The Scorpio P-Series Fabric Switch is an innovative modular device that combines the compute, network, and storage resources associated with each GPU/accelerator into a unified building block for data processing and scaling. Each building block operates independently, so the performance of one does not affect the others.

Each Scorpio P-Series Fabric Switch allocates and scales resources specifically tailored to individual GPUs. This design prevents resource contention that can occur when adjacent GPUs place uneven demands on the switch core. As a result, overall system efficiency and GPU utilization are significantly improved, delivering stable and highly predictable top-tier performance.
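The contention problem that per-GPU allocation avoids can be illustrated with a toy bandwidth model. The sketch below is not Scorpio’s internal design; it contrasts a hypothetical shared switch core that splits capacity in proportion to demand with fixed per-GPU slices, using made-up numbers.

```python
from typing import List

def shared_allocation(demands: List[float], capacity: float) -> List[float]:
    """One shared core: when oversubscribed, bandwidth is split in proportion to demand."""
    total = sum(demands)
    if total <= capacity:
        return list(demands)
    return [capacity * d / total for d in demands]

def partitioned_allocation(demands: List[float], capacity: float) -> List[float]:
    """Per-GPU partitions: each GPU gets at most an equal, reserved slice."""
    slice_gb_s = capacity / len(demands)
    return [min(d, slice_gb_s) for d in demands]

capacity = 400.0                      # hypothetical switch capacity, GB/s
calm = [100.0, 100.0, 100.0, 100.0]   # every GPU needs 100 GB/s
noisy = [100.0, 100.0, 100.0, 500.0]  # GPU 3 suddenly demands 500 GB/s

for name, alloc in [("shared", shared_allocation), ("partitioned", partitioned_allocation)]:
    print(name, "GPU 0:", alloc(calm, capacity)[0], "->", alloc(noisy, capacity)[0])
```

When GPU 3 surges, GPU 0 drops from 100 to 50 GB/s on the shared core but keeps its 100 GB/s with partitioning. The trade-off is that strict slices can leave capacity idle when other GPUs are quiet.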

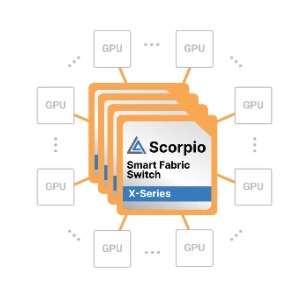

b. Scorpio X-Series Fabric Switch

① The Need for Expansion Due to the Growth of GPUs and Accelerators

With the explosive growth in the number of GPUs and accelerators, data centers now require scalable architectures. The industry is shifting from AI training-focused infrastructure to inference-oriented setups, driving the need for tailored accelerators. Hyperscalers are developing homogeneous and scalable connectivity solutions specifically designed for AI workloads.

② Role and Features of Scorpio X-Series Fabric Switches

- Designed to deliver the highest backend GPU-to-GPU bandwidth and enable platform-specific customization

- Improves bandwidth and latency through protocol enhancements, ensuring stable scaling of homogeneous GPU or accelerator fabrics

- Provides real-time insights via the COSMOS platform, enabling optimal user experience, maximum uptime, and improved return on investment (ROI)

E. Taurus Ethernet Smart Cable Module™

Heterogeneous computing refers to different types of systems (such as servers, GPU trays, and storage systems) working together. As this model has become more widespread, data centers, cloud servers, and networks require significantly greater processing capacity and bandwidth. This has led to a dramatic increase in data movement between server and network components, such as between servers and storage or between servers and network equipment.

To handle this growing traffic, port speeds have been raised from 25 Gbps per lane to 100 Gbps per lane. This, however, creates a new challenge: the server-to-switch link can become a bottleneck, because signals at these speeds degrade much faster over copper, and the cabling that connects servers to switches may not be able to carry them over the required distances. Solving this requires appropriate hardware and network infrastructure.
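Per-lane speed matters because a port’s aggregate bandwidth is split across electrical lanes. The arithmetic below shows how many lanes a 400G port needs at each per-lane rate; it ignores the encoding and FEC overhead that real Ethernet PHYs add on top of the nominal rates, and the helper name is illustrative.

```python
import math

def lanes_needed(port_gbps: int, lane_gbps: int) -> int:
    """Electrical lanes needed to carry a port of the given aggregate speed."""
    return math.ceil(port_gbps / lane_gbps)

for lane_gbps in (25, 50, 100):
    print(f"400G port at {lane_gbps} Gbps/lane: {lanes_needed(400, lane_gbps)} lanes")
```

Fewer, faster lanes mean fewer conductors per cable, but each lane’s signal is more fragile, which is exactly the reach problem discussed next.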

Taurus Ethernet Smart Cable Module for Server-to-Switch Connections

a. Limitations of Existing Connection Solutions

① Passive DAC Cables (Direct Attach Copper, non-powered copper cables)

- Effective reach is limited to 2 meters at 100 Gbps per lane, creating distance constraints.

- Bulky, stiff, and heavy, making them difficult to manage.

- They restrict airflow, reduce cooling efficiency, and are nearly impossible to service in rack environments.

② AOC (Active Optical Cables)

- Expensive to install and maintain.

- High power consumption leads to increased operational costs.

- The limited lifespan and reliability of optical modules require ongoing replacement and management.

③ Standard AEC Cables (Active Electrical Cables)

- Lack advanced management features for effective monitoring or control.

b. Advantages of the Taurus Ethernet Smart Cable Module

- Consumes 50% less power than AOCs (Active Optical Cables).

- Thin, flexible copper cables support extended reach of up to 7 meters.

- Integrated smart functions and COSMOS software ensure reliable data transmission.

- Enhanced management capabilities offer advanced fleet (multi-rack) monitoring and in-depth diagnostics.

The Taurus module can be applied to high-bandwidth copper Ethernet cables, enabling flexible connections across various network components. This contributes to a smarter, more efficient network through “active” and “intelligent” cabling.

1. Aries PCIe/CXL Smart Cable Module (SCM): Designed for inter-rack server connections, this solution goes beyond the traditional 2-meter limit, extending reach to 3 meters with an active riser card that reduces transmission errors.

2. Taurus Ethernet Smart Cable Module: Supports Ethernet speeds of up to 100 Gb/s per lane across multiple form factors for switch-to-switch and switch-to-server applications, with reach extended to up to 7 meters.

3. PCIe-over-Optical Solutions including AOCs: These solutions enable rack-to-rack connectivity using active optical cables (AOC) for PCIe signaling.
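The reach figures above suggest a simple selection rule: use the least power-hungry option that covers the distance. The sketch below encodes the numbers quoted in this article (2 m for passive DAC and 7 m for Taurus-class active electrical cables at 100 Gb/s per lane); the ordering and helper are illustrative, and real limits vary by product, lane rate, and cable gauge.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class CableOption:
    name: str
    max_reach_m: float

# Ordered from lowest to highest power/cost; reach limits as quoted in this article.
OPTIONS = [
    CableOption("passive DAC", 2.0),
    CableOption("active electrical cable (e.g. Taurus SCM)", 7.0),
    CableOption("active optical cable", float("inf")),
]

def pick_cable(distance_m: float) -> str:
    """Return the first option in the list whose reach covers the distance."""
    for option in OPTIONS:
        if distance_m <= option.max_reach_m:
            return option.name
    raise ValueError("no option covers this distance")

for distance in (1.5, 5.0, 20.0):
    print(f"{distance} m -> {pick_cable(distance)}")
```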

c. Applications

- Switch-to-server connections

- Switch-to-switch interconnects

- High-density rack configurations for AI and machine learning workloads

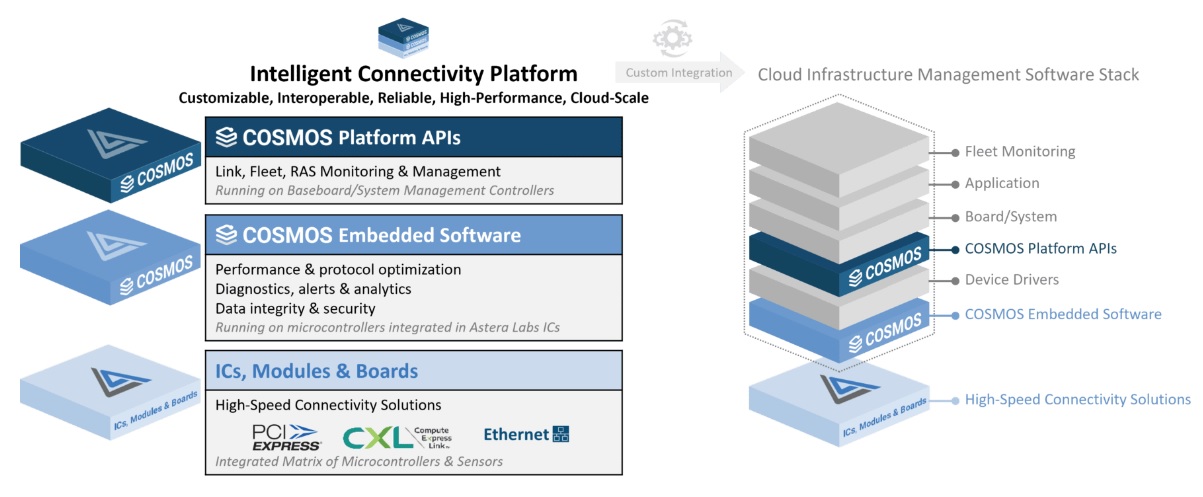

▶Software Component: COSMOS (Connectivity System Management and Optimization Software)

A. COSMOS: Core Platform Software Component

- Link Management: Ensures secure and robust data communication between devices

- Fleet Management: Enables real-time monitoring and predictive maintenance for server groups

- RAS (Reliability, Availability, Serviceability): Offers error detection, reporting, and testing features

- Provides telemetry data for automated fault correction and performance monitoring

COSMOS runs both on the on-chip microcontrollers in Astera Labs’ ICs and on system management controllers, and it plays a key role in managing AI infrastructure at cloud scale.
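Predictive maintenance of this kind generally comes down to watching error counters for anomalies. The following sketch is purely hypothetical: it does not use any COSMOS interface (whose API is not described in this article), and the link names, counter values, and threshold are invented. It flags a link when its latest per-interval correctable-error count exceeds a threshold or when that count has risen in every interval.

```python
from typing import Dict, List

def error_rates(counter_samples: List[int]) -> List[int]:
    """Convert cumulative error counters into per-interval deltas."""
    return [b - a for a, b in zip(counter_samples, counter_samples[1:])]

def links_to_service(telemetry: Dict[str, List[int]], threshold: int) -> List[str]:
    """Flag links whose latest error rate exceeds threshold or has risen every interval."""
    flagged = []
    for link, samples in telemetry.items():
        rates = error_rates(samples)
        if not rates:
            continue
        rising = len(rates) >= 3 and all(x < y for x, y in zip(rates, rates[1:]))
        if rates[-1] > threshold or rising:
            flagged.append(link)
    return flagged

# Hypothetical cumulative correctable-error counters polled from three links.
telemetry = {
    "gpu0-retimer": [0, 1, 1, 2, 2],    # stable and low
    "gpu1-retimer": [0, 2, 6, 14, 30],  # per-interval errors 2, 4, 8, 16: steadily rising
    "nic0-cable":   [0, 0, 1, 1, 120],  # sudden burst
}
print(links_to_service(telemetry, threshold=50))
```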

B. Importance of COSMOS

- Helps system administrators monitor connection status in real time and respond swiftly to issues

- Supports stable and efficient operations through optimized system performance

- Reduces operating costs through automated management and optimization

- Enables data-driven decision-making for optimal system operation

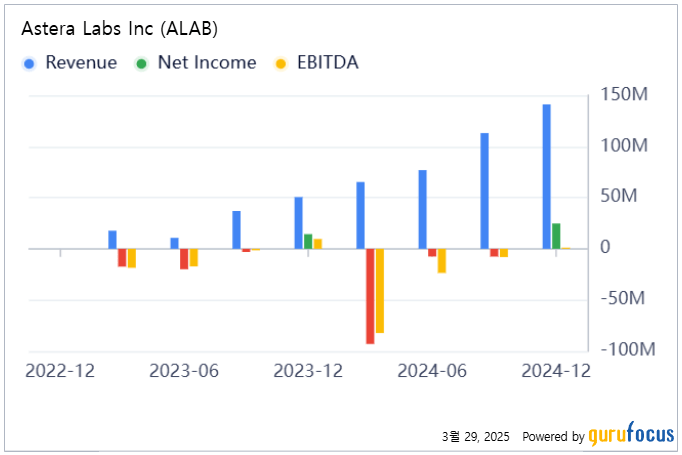

3. Financial Performance

A. Revenue

- Q1 2024: $65.3M

- Q2 2024: $76.9M (17.8% increase QoQ)

- Q3 2024: $113.09M (47% increase QoQ, 206% YoY growth)

- Q4 2024: $141.1M (25% increase QoQ, 179% YoY growth)

- FY 2024: $396.3M (242% YoY growth)

Astera Labs achieved record-breaking revenue every quarter in 2024, culminating in an all-time high quarterly revenue of $141.1M in Q4.
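The growth rates above can be reproduced from the quarterly figures. The short script below recomputes quarter-over-quarter growth and the full-year total. Q4 growth comes out at 24.8%, which the list rounds to 25%, and the quarterly sum is about $396.4M versus the reported $396.3M, a gap consistent with rounding in the quarterly figures.

```python
from typing import Dict

# Quarterly revenue in $M, as listed above.
revenue_musd: Dict[str, float] = {
    "Q1 2024": 65.3, "Q2 2024": 76.9, "Q3 2024": 113.09, "Q4 2024": 141.1,
}

def qoq_growth_pct(series: Dict[str, float]) -> Dict[str, float]:
    """Quarter-over-quarter growth (%) for each quarter after the first."""
    quarters = list(series)
    return {cur: (series[cur] / series[prev] - 1) * 100
            for prev, cur in zip(quarters, quarters[1:])}

for quarter, growth in qoq_growth_pct(revenue_musd).items():
    print(f"{quarter}: {growth:+.1f}% QoQ")
print(f"Sum of quarters: ${sum(revenue_musd.values()):.1f}M")
```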

B. Profitability

a. GAAP Basis

- Q1 2024: Operating loss of $80.4M, net loss of $93M

- Q2 2024: Operating loss of $24.3M, net loss of $7.55M

- Q3 2024: Operating loss of $8.9M, net loss of $7.6M

- Q4 2024: Operating profit of $0.14M, net income of $24.71M

- FY 2024: Operating loss of $116.07M, net loss of $83.42M

b. Non-GAAP (Q4 2024)

- Operating profit: $48.4M

- Operating margin: 34.3%

- Net income: $66.5M

- EPS (Earnings Per Share): $0.37

A noteworthy achievement is that Astera Labs posted its first GAAP operating profit in Q4, signaling gradually improving profitability alongside revenue growth.
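The profitability figures can be cross-checked the same way. This sketch recomputes the Q4 non-GAAP operating margin (operating profit divided by revenue) and sums the quarterly GAAP net results. It only checks the internal consistency of the numbers quoted here and does not verify them against company filings.

```python
# Figures in $M, as listed above.
q4_revenue = 141.1
q4_non_gaap_operating_profit = 48.4
gaap_net_income = {"Q1": -93.0, "Q2": -7.55, "Q3": -7.6, "Q4": 24.71}

operating_margin_pct = q4_non_gaap_operating_profit / q4_revenue * 100
fy_net_income = sum(gaap_net_income.values())

print(f"Q4 non-GAAP operating margin: {operating_margin_pct:.1f}%")
print(f"FY 2024 GAAP net income, sum of quarters: ${fy_net_income:.2f}M")
```

The margin matches the reported 34.3%, and the quarterly net results sum to about -$83.44M, within rounding of the reported FY net loss of $83.42M.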

C. Growth Trends and Key Achievements

Astera Labs maintained strong growth momentum throughout 2024 and accomplished the following:

a. Product Diversification

- Growth in 2024 was primarily driven by the Aries PCIe Retimer product line, while Taurus Smart Cable Modules for Ethernet also showed strong performance in Q4.

b. Scorpio Smart Fabric Switches

- Introduced a new portfolio for AI infrastructure, currently shipping in pre-production volumes.

c. Technological Innovation

- Launched the industry’s lowest power PCIe 6.x/CXL 3.x retimer solutions.

- Demonstrated PCIe 6.x switch-to-SSD interoperability at DesignCon 2025, achieving sequential read speeds of over 26 GB/s.

- Shipped Aries PCIe/CXL Smart Cable Modules featuring flexible copper cables with 7-meter reach.

d. Industry Collaboration

- Participated as a board member of the Ultra Accelerator Link Consortium, contributing to the development of UALink technology for high-speed interconnects among AI accelerators within large-scale AI clusters.

D. Outlook for 2025

Astera Labs expects Q1 2025 revenue to be between $151M and $155M, with a projected GAAP gross margin of approximately 74%.
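The guidance can be translated into implied sequential growth with simple arithmetic. The sketch below compares the guided range with Q4 2024 revenue and applies the guided ~74% gross margin; it is a restatement of the company’s guidance, not an independent forecast.

```python
Q4_2024_REVENUE = 141.1  # $M, from the revenue section

def implied_qoq_growth_pct(guided_revenue: float, prior: float = Q4_2024_REVENUE) -> float:
    """Sequential growth implied by a revenue guide, in percent."""
    return (guided_revenue / prior - 1) * 100

low, high = 151.0, 155.0
for label, rev in [("low", low), ("midpoint", (low + high) / 2), ("high", high)]:
    gross_profit = rev * 0.74  # ~74% guided GAAP gross margin
    print(f"{label}: ${rev:.0f}M, {implied_qoq_growth_pct(rev):+.1f}% QoQ, "
          f"gross profit ~${gross_profit:.0f}M")
```

In other words, the guide implies roughly 7% to 10% sequential growth, a slower pace than the 25% to 47% quarterly jumps seen in 2024.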

CEO Jitendra Mohan stated, “2025 is expected to be a breakthrough year, with all four product families generating revenue across diverse customers and platforms.” Notably, the Scorpio Fabric product for GPU-CPU connectivity and backend AI accelerator clustering is expected to lead growth.

Backed by its strong position in the AI infrastructure market, Astera Labs is poised to sustain its growth trajectory into 2025.

4. Risk Factors

A. Market and Competition Risks

- Intense Competition: Competes with larger firms like Intel, AMD, and Broadcom, which may limit Astera Labs’ growth due to their greater resources and market power.

- Technological Shifts: Rapid changes in semiconductor technology could leave the company behind if not quickly addressed.

- Customer Concentration: Heavy reliance on a small number of major cloud service providers and data center operators poses a risk if any of these customers reduces or stops its purchases.

B. Financial and Operational Risks

- Profitability Challenge: Astera Labs has yet to demonstrate sustained profitability.

- Supply Chain Vulnerability: Semiconductor production depends on complex global supply chains that may be disrupted by geopolitical or logistical issues.

- Talent Retention: Maintaining skilled technical talent is vital; talent loss could negatively impact innovation and competitiveness.

5. Future Vision and Outlook

A. Innovative Technology

- Astera Labs differentiates itself through its Intelligent Connectivity Platform, which provides link management, fleet management, and RAS functionality.

- This software-defined architecture offers tailored diagnostics and telemetry to manage and optimize diverse systems.

B. Strategic Market Positioning

- Effectively leveraging growth in AI and cloud computing markets

- Building direct relationships with key AI GPU vendors and hyperscalers like NVIDIA and AWS

- Participating on the UALink Consortium board to help shape industry standards and strengthen its position in the AI platform space

C. Continuous Innovation

- Investing in future technologies to maintain competitive edge

- Developing new product lines, such as Scorpio and PCIe 6.0-capable devices, to meet evolving market demands

- Significant R&D investments, including the establishment of a new R&D center in Bangalore, India, accelerating global innovation efforts

D. Product-Specific Growth Outlook

a. Aries PCIe/CXL Smart DSP Retimer

- Currently deployed in over 80% of AI servers

- Early shipments of Aries 6 Retimers are underway, with adoption increasing among major hyperscalers and AI platform vendors such as NVIDIA

- High backlog to support early deployment of AI servers with NVIDIA Blackwell GPUs

- Supports PCIe 6.0 and CXL 3.x connectivity, enabling low-power, high-performance links among GPUs, accelerators, CPUs, NICs, and CXL memory controllers

b. Taurus Smart Cable Module (SCM)

- Deployed in 400Gb Ethernet applications, meeting both general-purpose computing and AI server needs

- Demand is expected to rise as data transfer speeds and system complexity increase

c. Scorpio Smart Fabric Switch

- Projected to contribute over 10% of revenue in 2025

- Tailored for AI infrastructure, offering cloud-scale connectivity and attracting strong market interest

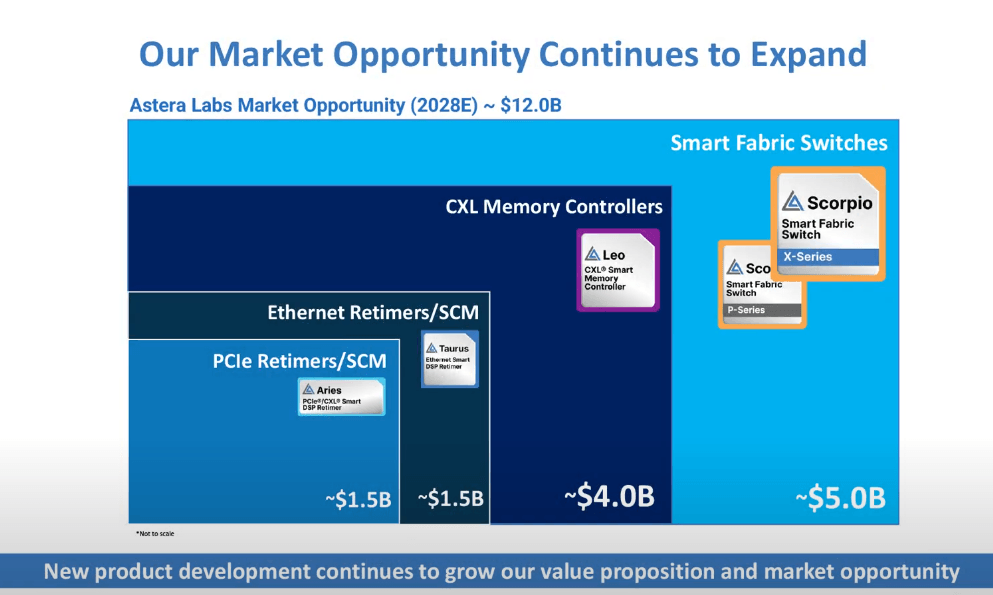

E. Market Expansion and Technology Advancement

- Total addressable market projected to exceed $12B by 2028, driven by rapid AI and cloud infrastructure growth

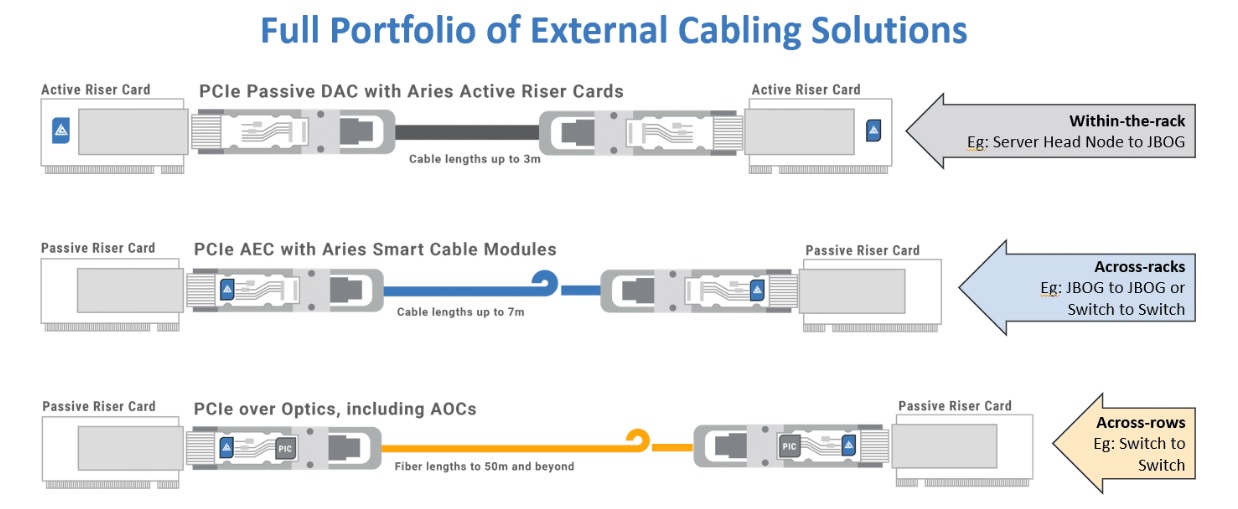

- Demonstrated PCIe over Optics technology, enabling signal transmission beyond 50 meters, significantly enhancing the scalability and usability of AI accelerator clusters

6. Relationship Between NVIDIA’s NVLink and CXL

A. Definition of NVIDIA NVLink and Its GPU Market Share

- NVLink is NVIDIA’s proprietary high-speed interconnect technology, designed to deliver high bandwidth and low-latency communication between GPUs or between GPUs and CPUs.

- NVIDIA holds a significant market share in the data center, artificial intelligence (AI), and machine learning (ML) sectors, which has led to increased usage of NVLink in these domains.

B. Limitations and Vendor Lock-In of NVLink

- Vendor Lock-In: NVLink is a proprietary NVIDIA technology and is only compatible with systems that use NVIDIA GPUs.

- Compatibility Limitations: Direct NVLink connections to CPUs, accelerators, or memory devices from other vendors are restricted.

- Scalability Issues: NVLink is optimized for intra-system connectivity and may face challenges when scaling across large, distributed systems.

C. Industry Standardization and Broad Support for CXL

- CXL (Compute Express Link) is an open interconnect standard developed by an industry consortium to ensure interoperability across hardware from multiple vendors.

- Major companies including Intel, AMD, Arm, Samsung, Microsoft, Google, and Dell are members of the CXL Consortium.

- NVIDIA has also joined the CXL Consortium and contributes to the advancement of CXL technology.

- CXL supports efficient connections between CPUs, memory, and a variety of accelerators (GPUs, FPGAs, ASICs), enabling flexibility in heterogeneous computing environments.

D. Possibility of Coexistence Between NVLink and CXL

- NVLink is optimized for ultra-high-speed communication between NVIDIA GPUs, offering superior performance within NVIDIA GPU clusters.

- CXL focuses on resource connectivity and memory coherence across systems, supporting the integration of diverse devices.

- NVIDIA continues to rely on NVLink for peak GPU performance while also moving toward greater system-wide compatibility and scalability via CXL.

- This suggests that NVLink and CXL are more likely to coexist and complement each other than to compete directly.

E. Standardization Trends and CXL’s Strengths

- As a vendor-neutral open standard, CXL can be adopted across a broad range of hardware and software ecosystems.

- Open standards let developers and manufacturers build richer ecosystems, promoting innovation and progress.

- Memory-Centric Computing: CXL enables memory pooling and sharing, supporting future computing architectures.

- CXL continues to evolve through new versions such as CXL 3.0, improving its performance and capabilities as a next-generation interconnect technology.

F. Why NVLink May Struggle to Become an Industry Standard

- The industry tends to prefer open standards over vendor-specific technologies.

- Data centers and hyperscale computing environments commonly mix hardware from various vendors, making compatibility and interoperability essential.

- Although NVIDIA holds a dominant share of the GPU market, competitors such as AMD and Intel are also investing actively in this space.

Conclusion

NVLink will continue to play a vital role within NVIDIA’s GPU ecosystem. However, when considering broader industry needs for interoperability, flexibility, and scalability, the importance and necessity of CXL are increasing. NVIDIA recognizes this trend and is expanding its support for CXL through active participation in the consortium.

Therefore, it would be premature to assume that NVLink’s growth undermines the future of CXL. The two technologies can coexist, complementing each other, and the industry is expected to build a more robust and flexible ecosystem through open standards like CXL.

7. Competitive Dynamics Between NVIDIA’s NVLink and the Scorpio X-Series Fabric Switch

Both technologies are designed to enable high-bandwidth communication between GPUs to support GPU scale-up architectures.

A. Competitive Focus: “GPU Scale-Up” Solutions

Both aim to solve the same core challenge—reducing communication latency between GPUs and expanding bandwidth to enhance computational performance.

- NVIDIA NVLink is a closed interconnect technology tailored for its own GPUs.

- Astera Labs’ Scorpio, by contrast, is an open fabric switch that uses standard interfaces such as PCIe and CXL.

This creates a strategic choice for system architects:

- Should they build a large-scale system entirely around NVIDIA?

- Or should they opt for an open fabric designed with multi-vendor, heterogeneous accelerators in mind?

B. Ecosystem and Deployment Scenarios

- NVIDIA DGX/HGX Reference Design: These systems integrate GPUs (such as the A100 or H100) with NVLink and NVSwitch, offering simplified deployment, optimized performance, and full compatibility with NVIDIA’s software stack (CUDA, libraries, NGC, etc.).

- Open Data Center/Cloud Infrastructure: Hyperscalers and enterprises that want more flexible environments, mixing AMD GPUs, Intel GPUs, FPGAs, or AI ASICs, can use open-standard fabric switches like Scorpio to support multi-vendor setups and adopt new technologies such as CXL memory sharing more easily.

C. Performance & Scalability vs. Flexibility & Open Standards

- NVLink offers best-in-class bandwidth and latency when connecting only NVIDIA GPUs, thanks to its tight integration with the GPU architecture.

- Scorpio, which is built on a PCIe/CXL fabric rather than direct GPU links, may not yet match NVLink in raw speed or latency (though this gap is narrowing with PCIe 5.0 and CXL 2.0+).

- Scorpio’s key strength is flexibility: the ability to link various types of GPUs, accelerators, and servers into a unified fabric. For deployments not limited to NVIDIA GPUs, this offers significant advantages in future scalability and operational agility.

D. Outlook

- NVIDIA continues to evolve NVLink and NVSwitch to strengthen its GPU ecosystem, and demand for its DGX/HGX stack will likely grow as large-scale AI model training needs increase.

- Meanwhile, as data center resources become more disaggregated and CXL-based memory/accelerator sharing expands, open fabrics like Scorpio are expected to play a larger role.

- Particularly in environments that prefer open interfaces or need to combine GPUs and AI ASICs from multiple vendors, products like Scorpio can greatly enhance infrastructure flexibility.

Ultimately, in the large-scale AI/HPC market, the “NVIDIA single-vendor stack (NVLink)” versus “open fabric (Scorpio, etc.)” paradigm will continue to be a focal point of competition.

Disaggregation: A technical concept that enables the independent management and operation of resources (CPU, memory, storage, GPU, etc.) that were traditionally integrated into a single system or device.

8. Two-Minute Pitch

Peter Lynch emphasized the importance of being able to deliver a two-minute pitch about a stock you are interested in, including the reasons for buying it, the company’s prospects, and its story. If you have thoroughly researched and can confidently articulate this two-minute pitch, you may consider purchasing the stock.

(Note: All investment decisions and responsibilities rest solely with you. Always invest with surplus funds and focus on long-term investments.)

With the rapid rise of AI and the accelerated design cycles of AI platforms, there is an exponential need for cloud-scale compute power. To fully unlock the potential of cloud and AI infrastructure, purpose-built connectivity solutions are essential.

ALAB is a company that develops these specialized connectivity solutions.

ALAB’s products are designed to eliminate three major data center bottlenecks:

- Heterogeneous computing bottlenecks

- Chip-to-chip computing bottlenecks

- Memory capacity/bandwidth and network speed bottlenecks

ALAB’s product lineup includes:

- Aries PCIe/CXL Smart DSP Retimers

- Aries PCIe®/CXL® Smart Cable Modules™

- Leo CXL® Smart Memory Controller

- Scorpio Smart Fabric Switch

- Taurus Ethernet Smart Cable Module™

Throughout 2024, ALAB set a revenue record in every quarter, with year-over-year growth of 206% in Q3 and 179% in Q4. This success was driven primarily by the strong market presence of the Aries retimer product line and by rising demand in the AI and cloud infrastructure markets.

Despite this momentum, ALAB faces market and competitive risks, as well as financial and operational challenges.

Nonetheless, with its innovative technologies and Intelligent Connectivity Platform, ALAB is strategically positioned to capitalize on the growth of the AI and cloud computing markets. Its continuous innovation underscores a promising future outlook.

NVLink and CXL are not necessarily in direct competition—in fact, they are likely to evolve in a complementary direction.

On the other hand, NVIDIA’s NVLink and Astera Labs’ Scorpio X-Series do represent a competitive dynamic. While NVLink holds substantial influence thanks to NVIDIA’s expanding AI/HPC ecosystem, the diversification of GPU/accelerator architectures and the rise of the CXL era will increase demand for open fabric switches like Scorpio. As a result, the competitive tension between these two technologies is expected to remain a key topic in future data center architectures.
