It’s no exaggeration to say that 2024 was the year of Artificial Intelligence (AI). Among many stocks, NVIDIA drew particular attention as its share price soared. The development of AI is expected to continue in 2025. However, because NVIDIA’s market capitalization has already grown significantly, it’s unlikely to multiply several times over as it did from 2022 to 2024.

As someone searching for “tenbagger” opportunities (stocks that could return ten times the original investment), I’m now looking for companies with relatively small market caps that could still benefit from the continued growth of AI. One recent example is Broadcom, whose stock price rose substantially due to its development of ASICs (Application-Specific Integrated Circuits). There are many other semiconductor stocks with similar ties to AI.

However, the challenge with investing in AI semiconductors is that investors unfamiliar with the field find it hard to fully understand these companies’ business models and technologies. Warren Buffett of Berkshire Hathaway is famous for avoiding companies he doesn’t fully understand. For long-term investors pursuing tenbagger opportunities, a deep understanding of the company is essential to build confidence in the investment. While Mr. Buffett may find tech stocks difficult due to his age, those of us who are younger can make the effort to understand them to a reasonable extent.

In preparing to introduce some AI-related semiconductor companies, I did a great deal of studying. I wrote this article to explain some of the concepts and terms that I initially found difficult or unfamiliar. I’ll include plenty of examples and definitions throughout, so that you can understand them too. Even if it’s a bit challenging, I hope you’ll follow along at your own pace.

Semiconductors and the Direction of AI System Development

A. Key Goals in the Development of Semiconductors and AI Systems

At a high level, the development of semiconductors and AI systems is aimed at improving the following:

- Speed

- Efficiency

- Heat reduction

- Lower installation and maintenance costs

These four elements represent the core objectives in advancing semiconductor and AI technologies. Of the four, speed matters most: as AI models become larger and more complex, most technological advances focus on making systems faster.

B. Types of Speed Enhancements

- Computational speed improvement: The ability of semiconductors such as CPUs and GPUs to perform calculations more quickly.

- Data transfer speed improvement: The ability for data to move faster within a system.

These two factors play a major role in determining the overall performance of AI systems. Modern AI systems must process vast amounts of data rapidly, making data transfer speed a key performance factor.

Glossary (1)

- Cloud Computing

- Storage

- Memory

- Cache

- Database

- Interconnect Solution

• Cloud Computing

Cloud computing refers to the delivery of computing services—such as servers, storage, databases, networks, and software—over the internet (the “cloud”). This allows users to access computing resources on-demand without the need to purchase or maintain physical hardware.

• Storage

Storage refers to the physical or virtual space used to store data. Simply put, it’s the place where data is kept. Examples include a computer’s hard drive, external drives, or cloud storage services.

• Memory

Understanding memory is critical when discussing semiconductors and AI.

Memory refers to the devices in a computer system that hold data temporarily so the processor can reach it quickly. RAM (Random Access Memory) is one example: it stores the data for tasks currently being executed.

Memory plays a vital role in system performance. Its bandwidth (how much data can be read per second), its latency (how long the CPU waits for each access), and its total capacity all have a significant impact on system speed and stability.

Analogy: Comparing Memory and Storage

Cutting Board: Memory

- A cutting board is where you prepare ingredients during cooking. Only the ingredients currently in use are placed here.

- Similarly, RAM holds data that’s actively being used so the computer can access and process it quickly.

Refrigerator: Storage

- A refrigerator stores ingredients long-term until they’re needed for cooking.

- Likewise, storage devices like HDDs or SSDs retain data over time—documents, photos, videos, etc.

Example: How a computer handles data (cooking analogy)

a. Preparation process:

- You take ingredients from the fridge and place them on the cutting board.

- As you cook, you chop and use the ingredients.

- If you need more, you go back to the fridge.

b. This mirrors computer behavior:

- The computer retrieves data from storage and loads it into RAM.

- While working, the data in RAM is processed rapidly.

- More data is fetched from storage as needed.

c. Efficiency:

- A large cutting board lets you handle more ingredients at once, speeding up cooking.

- Similarly, larger/faster RAM allows a computer to process more data simultaneously.

- A small cutting board forces frequent trips to the fridge, slowing things down—just like insufficient RAM slows computer operations.
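To make the cutting board analogy concrete, here is a minimal Python sketch. It assumes a simple "evict the least recently used item" rule for deciding what leaves RAM; the function name `count_storage_fetches`, the ingredient list, and the slot counts are all made up for illustration, and real operating systems manage memory in far more sophisticated ways.

```python
from collections import OrderedDict

def count_storage_fetches(accesses, ram_slots):
    """Count how often data must be fetched from storage (trips to the fridge)."""
    ram = OrderedDict()  # items currently "on the cutting board"
    fetches = 0
    for item in accesses:
        if item in ram:
            ram.move_to_end(item)    # already in RAM: mark as recently used
            continue
        fetches += 1                 # not in RAM: load it from storage
        ram[item] = True
        if len(ram) > ram_slots:
            ram.popitem(last=False)  # RAM full: evict the least recently used item
    return fetches

# Hypothetical recipe: the same five ingredients are needed over and over.
accesses = ["onion", "carrot", "beef", "garlic", "salt"] * 4

small_board = count_storage_fetches(accesses, ram_slots=2)
large_board = count_storage_fetches(accesses, ram_slots=5)
print("fetches with 2 slots:", small_board)
print("fetches with 5 slots:", large_board)
```

With only two slots, every access in this pattern forces a trip to storage, while five slots need just the first five loads. The access pattern is deliberately unfavorable to the small board, so the gap is larger than you would see in typical workloads.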

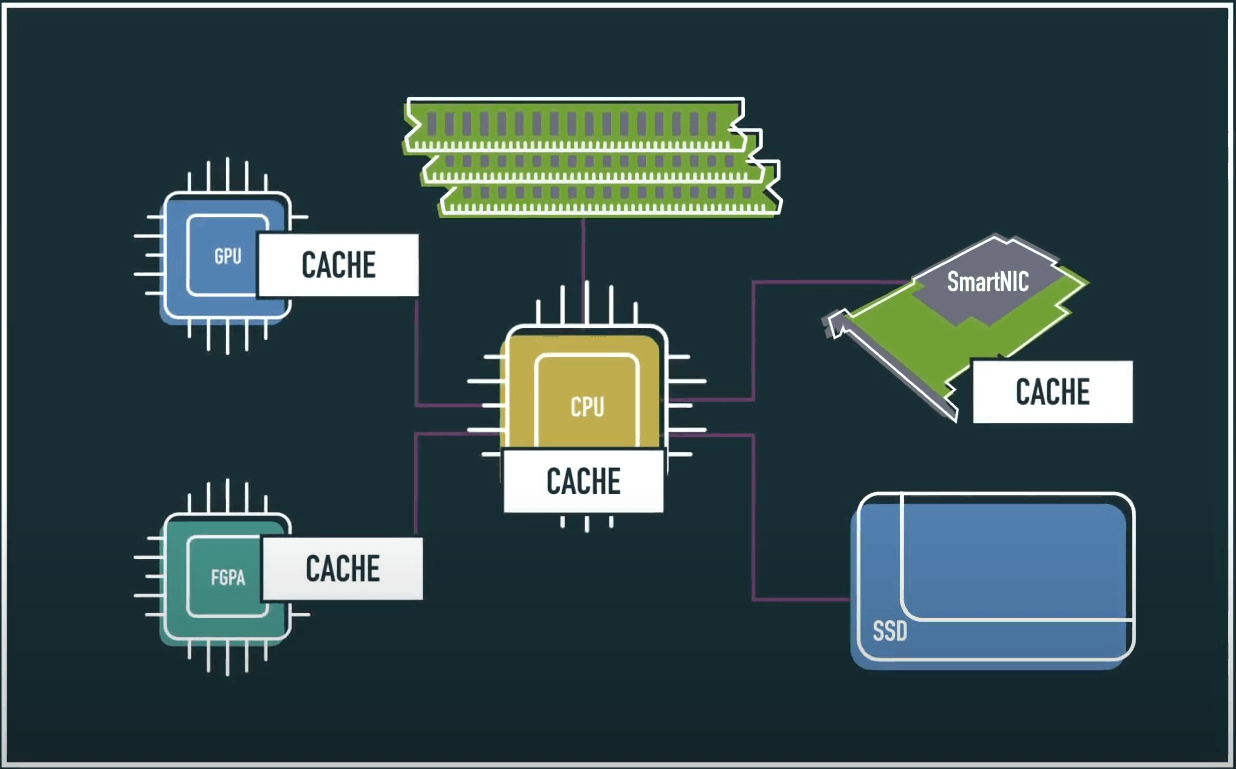

• Cache

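A cache is a small, high-speed memory that temporarily holds frequently used data close to the CPU or another processor. Because a cache has a much shorter access time than main memory (RAM), keeping frequently used data in it improves overall system performance.

Memory access speed hierarchy (slowest to fastest):

Storage < RAM < Cache < Register

A register is the small storage inside the processor core that holds the values being operated on at that moment.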
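The effect of a cache can be estimated with a standard textbook formula: average access time = cache access time + miss rate × RAM access time. The sketch below applies it in Python. The latency figures are round numbers I assumed purely for illustration (they are not measurements of any real chip), and `average_access_time` is a hypothetical helper name.

```python
# Illustrative latencies in nanoseconds (assumed round numbers, not measurements).
LATENCY_NS = {"register": 0.3, "cache": 1.0, "ram": 100.0, "storage": 100_000.0}

def average_access_time(cache_hit_rate, cache_ns, ram_ns):
    """Average time per access when a cache sits in front of RAM.

    Every access pays the cache lookup; misses also pay the RAM access.
    """
    miss_rate = 1.0 - cache_hit_rate
    return cache_ns + miss_rate * ram_ns

for hit_rate in (0.50, 0.90, 0.99):
    t = average_access_time(hit_rate, LATENCY_NS["cache"], LATENCY_NS["ram"])
    print(f"hit rate {hit_rate:.0%}: about {t:.1f} ns per access")
```

The point of the exercise is that even a small improvement in hit rate matters a lot, because each miss costs roughly a hundred times more than a hit under these assumed numbers.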

• Database

A database is a system designed to store and manage data in an organized way, making it easy to search and access. For example, your smartphone contacts app stores names and phone numbers using a database.

• Interconnect Solution

An interconnect solution refers to the technologies and methods used to transmit data and maintain connectivity between various systems, networks, and devices.

Glossary (2)

- PCIe (Peripheral Component Interconnect Express)

- Bandwidth

- Ethernet

- Workload

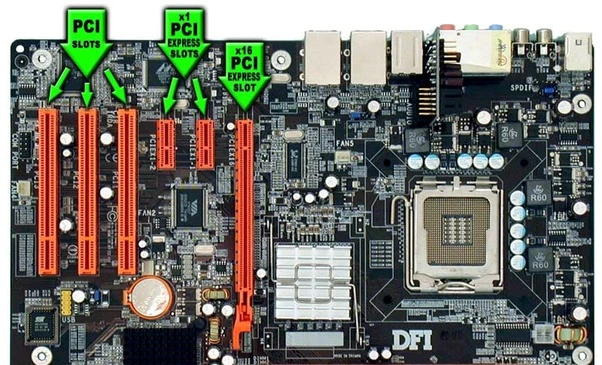

• PCIe (Peripheral Component Interconnect Express)

PCIe is a high-speed interface used to connect internal components within a computer, enabling fast data exchange between them. Think of it as a highway that lets different computer parts work efficiently together.

Common uses:

- Graphics Card (GPU): Typically uses an x16 slot (16-lane highway).

- SSD: High-speed NVMe SSDs often use x4 slots.

- Network Card: Generally uses x1 slots, which are sufficient for network data transfer.

Example: Highway analogy for PCIe

Highway lanes = PCIe lanes

- The number of lanes (x1, x4, x8, x16) indicates how many data paths exist.

- More lanes = more data transferred at once (e.g., PCIe x16 = 16-lane highway).

Speed limit = PCIe generation

- PCIe generations (Gen 1, 2, 3, 4…) increase speed limits.

- Newer generations support faster data transfer (e.g., PCIe 5.0 x16).

A typical PCI slot on a computer motherboard
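The "lanes times speed limit" idea from the highway analogy can be turned into a rough calculation. The sketch below uses the published per-lane transfer rates and line encodings for PCIe generations 1 through 5. The function name `pcie_bandwidth_gb_per_s` is my own, and the result is an approximate one-direction figure that ignores protocol overhead, so real-world throughput will be somewhat lower.

```python
# Per-lane signalling rate (GT/s) and line-encoding efficiency by PCIe generation.
PCIE_GENERATIONS = {
    1: (2.5, 8 / 10),      # 8b/10b encoding
    2: (5.0, 8 / 10),      # 8b/10b encoding
    3: (8.0, 128 / 130),   # 128b/130b encoding
    4: (16.0, 128 / 130),
    5: (32.0, 128 / 130),
}

def pcie_bandwidth_gb_per_s(generation, lanes):
    """Approximate usable bandwidth in one direction, in GB/s."""
    gigatransfers, efficiency = PCIE_GENERATIONS[generation]
    return gigatransfers * efficiency * lanes / 8  # 8 bits per byte

for generation, lanes in [(3, 4), (3, 16), (4, 16), (5, 16)]:
    bandwidth = pcie_bandwidth_gb_per_s(generation, lanes)
    print(f"PCIe {generation}.0 x{lanes}: about {bandwidth:.1f} GB/s per direction")
```

Doubling the lanes doubles the bandwidth, and each of these newer generations doubles the per-lane rate, which is why "PCIe 5.0 x16" sits at the top of this list.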

• Bandwidth

Bandwidth refers to the maximum amount of data that can be transmitted over a network or connection in a given period of time. As mentioned earlier, AI systems are evolving toward higher speeds, and increasing bandwidth is a part of that trend.

Example: Water pipe analogy for bandwidth

- A thick pipe can carry more water quickly.

- Similarly, higher bandwidth allows more data to move rapidly.
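As a quick back-of-the-envelope illustration of the pipe analogy, transfer time is simply data size divided by bandwidth. The data size and the three bandwidth values below are hypothetical numbers chosen for the example, and the calculation ignores latency and protocol overhead.

```python
def transfer_seconds(data_gb, bandwidth_gb_per_s):
    """Ideal time to move data_gb gigabytes over a link, ignoring latency and overhead."""
    return data_gb / bandwidth_gb_per_s

# Hypothetical example: moving 128 GB of data over links of different "thickness".
for name, bandwidth in [("thin pipe", 1.0), ("medium pipe", 16.0), ("thick pipe", 64.0)]:
    print(f"{name} ({bandwidth:g} GB/s): {transfer_seconds(128, bandwidth):.1f} s")
```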

• Ethernet

Ethernet is a widely used networking technology that allows computers and other devices to communicate within a local area network (LAN).

Key roles:

- Data transmission between connected devices

- Communication rules (protocols) that keep data transfer orderly and efficient

• Workload

In AI and machine learning, a workload refers to the set of tasks a system performs. Common components include:

- Data processing/preprocessing: Cleaning and preparing large datasets

- Model training: Using data to train ML models

- Inference: Making predictions based on trained models

- Computation: Running complex mathematical operations and algorithms
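The workload stages listed above can be seen in miniature in the following Python sketch. It is a toy under stated assumptions: the "model" is just a straight line fitted by least squares, the dataset is invented, and the function names `preprocess`, `train`, and `infer` are my own labels for the stages. Real AI workloads run the same kinds of steps on vastly larger data and models.

```python
def preprocess(rows):
    """Data preprocessing: drop incomplete records."""
    return [(x, y) for x, y in rows if x is not None and y is not None]

def train(samples):
    """Model training: fit y = slope * x + intercept by least squares."""
    n = len(samples)
    mean_x = sum(x for x, _ in samples) / n
    mean_y = sum(y for _, y in samples) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in samples)
    variance = sum((x - mean_x) ** 2 for x, _ in samples)
    slope = covariance / variance
    return slope, mean_y - slope * mean_x

def infer(model, x):
    """Inference: apply the trained model to a new input."""
    slope, intercept = model
    return slope * x + intercept

# Invented dataset with two incomplete records.
raw = [(1, 3), (2, 5), (None, 4), (3, 7), (4, None), (5, 11)]
model = train(preprocess(raw))
print("prediction for x = 10:", infer(model, 10))
```

The arithmetic inside `train` and `infer` is the "computation" component: in large models it is this part that is handed to GPUs and other accelerators.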

Cache coherence

B. Why CXL Was Developed

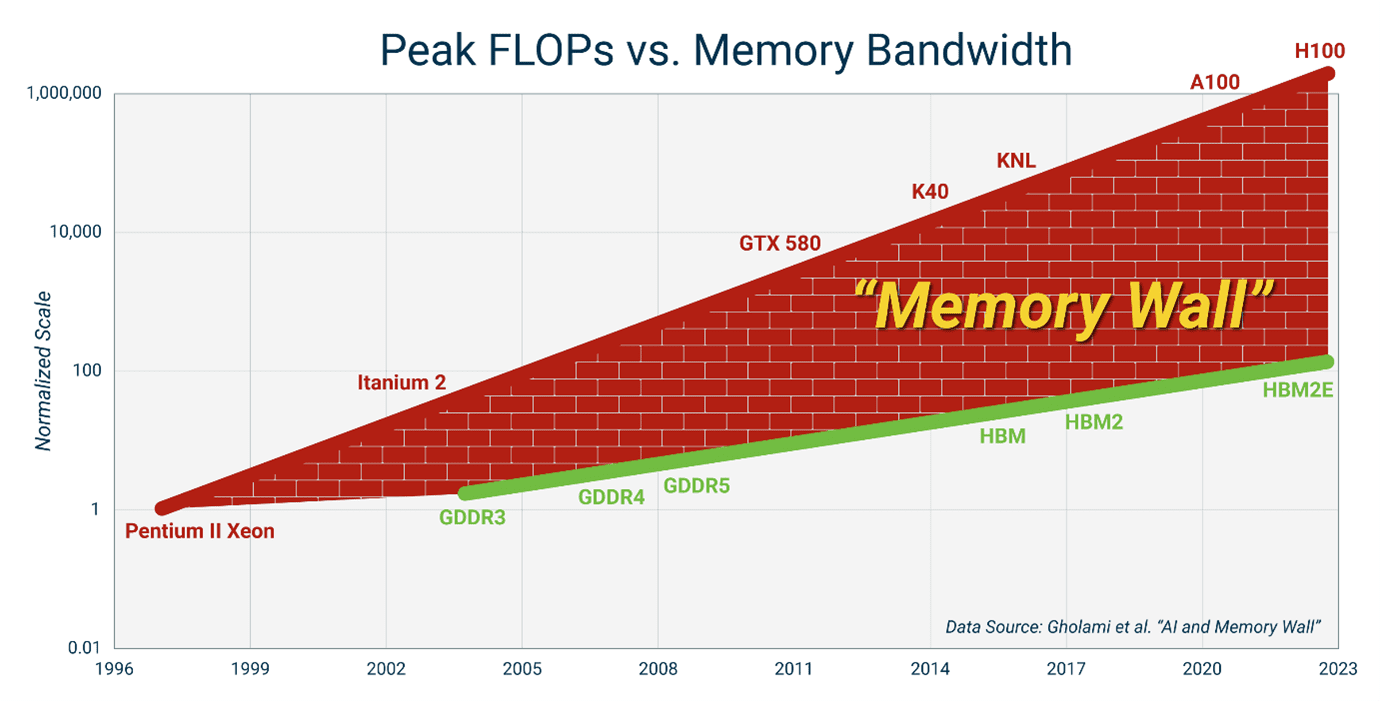

a. Rising Data Demands from AI and Big Data

- With the explosive growth in AI and big data technologies, the volume of data to be processed has skyrocketed.

- Traditional memory architectures struggle to handle this scale efficiently.

b. Limitations of Existing Memory Systems

- Capacity limits: In current server systems, each CPU can only support up to 16 DRAM modules, capping memory at around 8TB, which is insufficient for rapidly growing data needs.

- Memory wall: The processor’s performance outpaces the memory subsystem’s bandwidth, creating a bottleneck that limits overall system performance.

- Inefficient data transfer: Accessing data from memory through multiple interface layers is slow and inefficient.

(Visual: A graph showing GPU performance consistently outpacing memory performance)
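One common way to reason about the memory wall is the roofline model, which I am borrowing here as an illustration (the original material does not name it). It says that achievable performance is the lower of two limits: the processor's peak compute rate, or memory bandwidth multiplied by the number of calculations performed per byte of data. The accelerator figures below are hypothetical round numbers, not the specifications of any real product.

```python
def attainable_tflops(peak_tflops, bandwidth_tb_per_s, flops_per_byte):
    """Roofline estimate: performance is capped by compute or by memory, whichever is lower."""
    memory_bound = bandwidth_tb_per_s * flops_per_byte
    return min(peak_tflops, memory_bound)

# Hypothetical accelerator: 1000 TFLOPS peak compute, 3 TB/s memory bandwidth.
PEAK_TFLOPS = 1000.0
BANDWIDTH_TB_PER_S = 3.0

for intensity in (2, 50, 500):  # calculations performed per byte read from memory
    result = attainable_tflops(PEAK_TFLOPS, BANDWIDTH_TB_PER_S, intensity)
    print(f"{intensity} FLOPs/byte: about {result:g} TFLOPS attainable")
```

When a task does only a few calculations per byte, the processor spends most of its time waiting for data, so the expensive compute capacity sits idle. That is the bottleneck the memory wall describes.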

c. Need for Greater System Efficiency

- In data centers and high-performance computing environments, there’s a growing need for technologies that overcome memory bottlenecks and enhance overall system efficiency.

- This led to the development of CXL as a solution that enables efficient interconnectivity and flexible memory expansion across CPUs, GPUs, memory, and other computing devices.

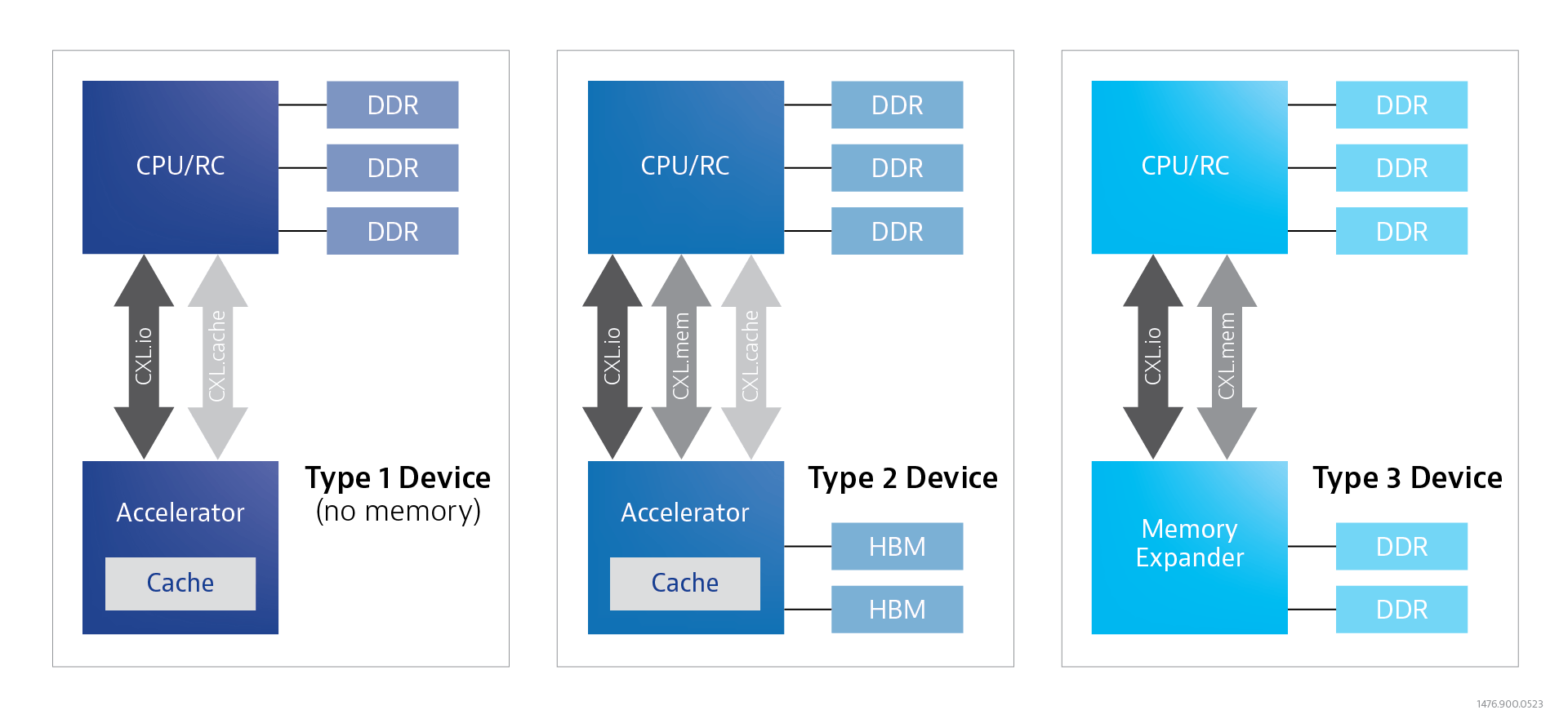

C. CXL Protocols and Standards

CXL consists of three main protocols that work together to greatly enhance high-performance computing systems:

a. CXL.io – Similar to PCIe

- Function: Handles standard input/output (I/O) data transmission between the CPU and other devices.

- Role: Works like PCIe, offering high-bandwidth, low-latency data transfer.

b. CXL.cache – Cache Coherence

- Function: Lets a device such as an accelerator cache data from the host CPU’s memory while keeping that copy consistent with the CPU’s own caches.

- Role: Reduces memory access times and prevents bottlenecks by enabling fast cache access.

c. CXL.mem – Memory Coherence, Pooling & Sharing

- Function: Lets the host CPU access memory attached to a device, enabling memory expansion and sharing across devices.

- Role: Allows seamless memory access between CPUs and accelerators, ideal for large-scale data processing.

By combining these protocols, CXL enables consistent memory sharing between host CPUs and computing accelerators like AI processors, simplifying programming and improving performance.

Device Types using CXL Protocols:

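- Type 1 Devices: CXL.io + CXL.cache (for example, accelerators or smart network cards without memory of their own to share)

- Type 2 Devices: CXL.io + CXL.cache + CXL.mem (for example, GPUs and other accelerators with their own memory)

- Type 3 Devices: CXL.io + CXL.mem (for example, memory expansion modules)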
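The mapping between device types and protocols is small enough to write down as a lookup table. The Python sketch below does that; `CXL_DEVICE_TYPES` and `classify_device` are names I made up for illustration and are not part of any CXL software. Note that CXL.mem is the specification's short name for the memory protocol.

```python
# Protocol combinations for each CXL device type, as listed above.
CXL_DEVICE_TYPES = {
    "Type 1": frozenset({"CXL.io", "CXL.cache"}),
    "Type 2": frozenset({"CXL.io", "CXL.cache", "CXL.mem"}),
    "Type 3": frozenset({"CXL.io", "CXL.mem"}),
}

def classify_device(protocols):
    """Return the device type whose protocol set matches exactly, or None."""
    wanted = frozenset(protocols)
    for device_type, required in CXL_DEVICE_TYPES.items():
        if wanted == required:
            return device_type
    return None

print(classify_device(["CXL.io", "CXL.mem"]))               # a memory expander
print(classify_device(["CXL.io", "CXL.cache", "CXL.mem"]))  # an accelerator with its own memory
```

One thing the table makes obvious is that CXL.io appears in every device type: it is the baseline that the other two protocols build on.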

D. Memory Coherence and Memory Pooling/Sharing

a. Memory Coherence

- Definition: Ensures that multiple processors or devices accessing the same memory address always see the same data value.

- Purpose: Prevents data inconsistency, enabling correct and synchronized operations across all computing units.

Example 1:

If one processor writes data to a memory location, all others must read the updated value.

Example 2 (Collaborative Editing Analogy):

Imagine multiple people editing a shared document. Each person’s edits are instantly visible to others, preventing conflicts. Real-time sync ensures everyone sees the same version, preserving data consistency.

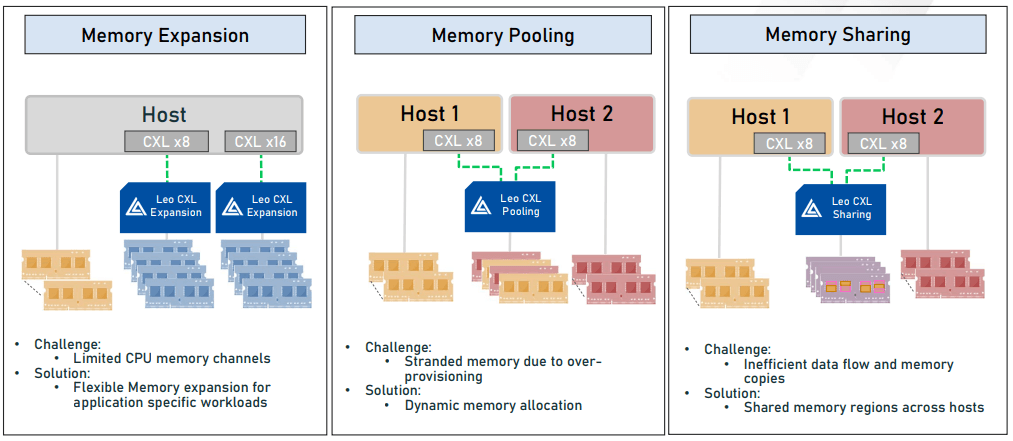
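To show what coherence means in practice, here is a toy Python model of one simple approach, often called write-invalidate: when one device writes to an address, stale copies in other devices' caches are thrown away so the next read fetches the new value. This is a teaching sketch only. The class `CoherentMemory` and the device names are hypothetical, and the actual CXL protocol is far more involved.

```python
class CoherentMemory:
    """Toy write-invalidate scheme: a write removes stale copies from other caches."""

    def __init__(self, device_names):
        self.memory = {}  # shared memory: address -> value
        self.caches = {name: {} for name in device_names}  # one private cache per device

    def read(self, device, address):
        cache = self.caches[device]
        if address not in cache:  # miss: fetch from shared memory
            cache[address] = self.memory.get(address)
        return cache[address]

    def write(self, device, address, value):
        self.memory[address] = value  # write through to shared memory
        for name, cache in self.caches.items():
            if name != device:
                cache.pop(address, None)  # invalidate other devices' copies
        self.caches[device][address] = value

system = CoherentMemory(["cpu", "gpu"])
system.write("cpu", 0x10, 1)
first = system.read("gpu", 0x10)   # the GPU now holds a cached copy
system.write("cpu", 0x10, 2)       # the CPU updates; the GPU's copy is invalidated
second = system.read("gpu", 0x10)  # the GPU re-fetches the value
print(first, second)
```

Without the invalidation step in `write`, the second GPU read would return the old value from its own cache, which is exactly the inconsistency that coherence is meant to prevent.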

b. Memory Pooling and Sharing

- Definition: Allows multiple devices (like GPUs, FPGAs, NICs) to access and use a shared pool of physical memory.

- Purpose: Improves resource efficiency by dynamically allocating memory to devices as needed.

Example:

Instead of giving each device its own memory block, they all draw from a large shared pool. This reduces waste and maximizes performance.
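The benefit of pooling shows up clearly in a small calculation. The sketch below compares the same total amount of memory arranged two ways: split into fixed private blocks, or offered as one shared pool. The demand figures and function names are invented for the example.

```python
def unmet_demand_dedicated(demands_gb, per_device_gb):
    """Each device has a fixed private block; demand above it cannot be served."""
    return sum(max(0, demand - per_device_gb) for demand in demands_gb)

def unmet_demand_pooled(demands_gb, pool_gb):
    """All devices draw from one shared pool; only the total matters."""
    return max(0, sum(demands_gb) - pool_gb)

# Hypothetical snapshot: four devices, 256 GB of memory in total either way.
demands = [100, 20, 10, 90]
dedicated_shortfall = unmet_demand_dedicated(demands, per_device_gb=64)  # 4 x 64 GB blocks
pooled_shortfall = unmet_demand_pooled(demands, pool_gb=256)             # one 256 GB pool
print("shortfall with dedicated blocks:", dedicated_shortfall, "GB")
print("shortfall with a shared pool:", pooled_shortfall, "GB")
```

With dedicated blocks, two devices run short while the other two leave most of their memory idle. With a pool, the idle capacity covers the busy devices, so nothing is wasted as long as total demand fits.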

E. Core Objectives of CXL Technology

- Performance Improvement: CXL dramatically boosts communication speeds between CPUs, GPUs, memory, and storage. This translates to faster data processing and overall system performance gains.

- Memory Scalability: CXL enables systems to expand memory capacity by 5 to 10 times, which is crucial for AI and big data applications that require vast memory resources.

- Resource Efficiency: CXL allows for shared memory pooling across multiple servers, significantly increasing data center resource utilization.

- Cost Reduction: By improving memory efficiency and enabling sharing, CXL helps cut data center operating costs.

F. Application Areas of CXL Technology

a. Artificial Intelligence & Machine Learning

- Supplies the large memory volumes needed for training and running large language models.

- Enhances AI workload performance through efficient memory sharing between CPU and GPU.

- Supports memory-intensive applications like vector databases.

b. Big Data Analytics

- Provides large-scale, in-memory databases for real-time analytics.

- Expands memory bandwidth for complex data processing tasks.

c. Data Centers

The data center is perhaps the most suitable environment to leverage CXL.

c.1 Memory Expansion and Pooling

- CXL allows for dramatic expansion of memory capacity and bandwidth in data centers.

- Overcomes limitations of traditional DDR memory.

- Enables memory pooling across multiple servers, improving utilization.

- Memory resources can be shared at the data center level for maximum efficiency.

c.2 Performance Enhancement

- CXL offers high-bandwidth, low-latency connectivity, boosting overall data center performance.

- Some sources cite up to 4x faster data transfer than PCIe. Because CXL runs on the PCIe physical layer, that figure depends on which PCIe generation is taken as the baseline.

- Reduces CPU-memory bottlenecks, accelerating data processing.

- Particularly effective for real-time AI and machine learning workloads.

c.3 Flexibility and Scalability

- CXL provides flexible architecture in connecting CPUs, memory, and accelerators.

- Resources such as memory and compute power can be dynamically allocated.

- Maintains compatibility with PCIe infrastructure while supporting upgrades and new features.

c.4 Cost Reduction

- Efficient memory management and sharing help reduce server operation costs.

- Enhances performance while reusing existing hardware, reducing initial investment.

- Resource allocation based on need helps optimize overall costs.
